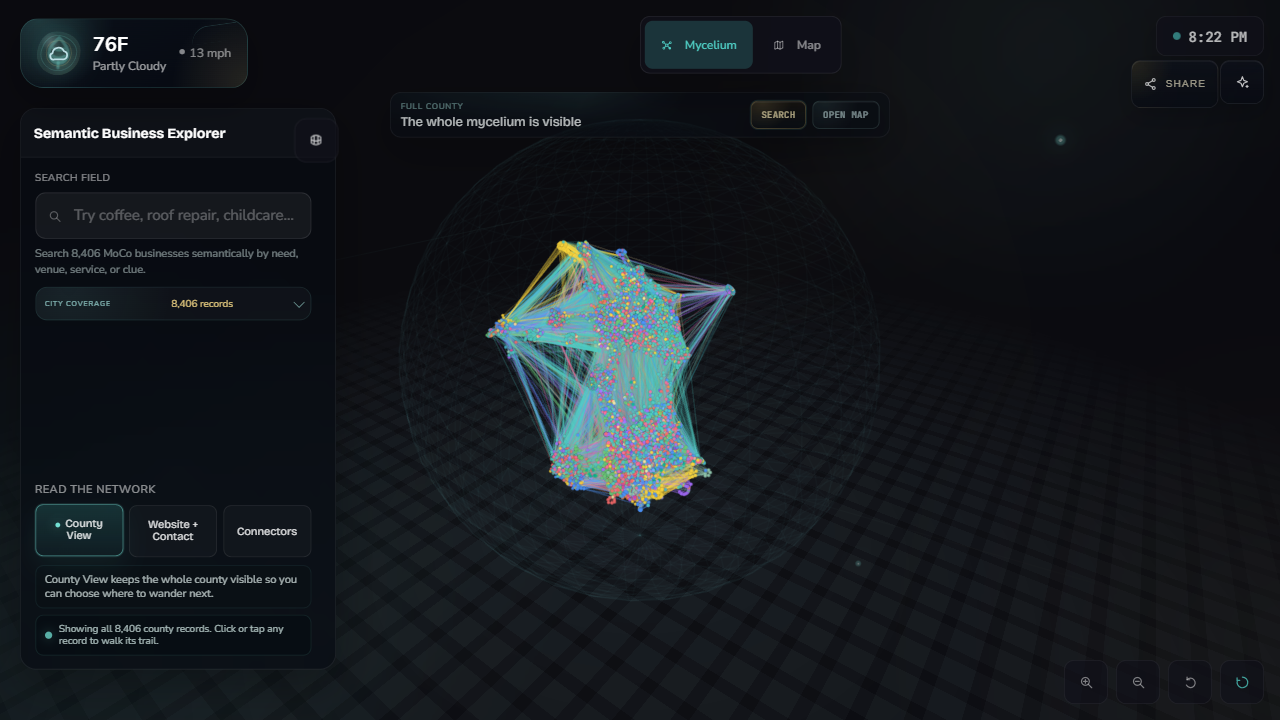

Lead intelligence systems

Public records, website discovery, audit signals, contact paths, and review queues organized into a queryable lead corpus.

- Flow: Discovery, enrichment, verification, export.
- Output: A clean lead list, review workflow, CRM import, or dashboard.
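As a rough illustration of how the four stages can hand data to one another, here is a minimal Python sketch. It is not a description of the actual system. Everything in it is hypothetical: the `Lead` record, the functions `discover`, `enrich`, `verify`, and `export_csv`, the input row fields, and the `no_website` / `no_https` audit signals. A real pipeline would draw on actual public-record sources and website discovery, and it would verify contacts far more thoroughly than a format check.

```python
import csv
import io
import re
from dataclasses import dataclass, field

# Hypothetical lead shape; real sources and fields will differ.
@dataclass
class Lead:
    name: str
    website: str = ""
    email: str = ""
    signals: list[str] = field(default_factory=list)

# Shape check only: it does not prove the mailbox exists.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def discover(records: list[dict]) -> list[Lead]:
    """Discovery: turn raw record rows into lead stubs, de-duplicated by normalized name."""
    seen: set[str] = set()
    leads = []
    for row in records:
        key = row["name"].strip().lower()
        if key and key not in seen:
            seen.add(key)
            leads.append(Lead(name=row["name"].strip(), website=row.get("website", "")))
    return leads

def enrich(leads: list[Lead], contacts: dict[str, str]) -> list[Lead]:
    """Enrichment: attach a contact path and simple (hypothetical) audit signals."""
    for lead in leads:
        lead.email = contacts.get(lead.name, "")
        if not lead.website:
            lead.signals.append("no_website")
        elif not lead.website.startswith("https://"):
            lead.signals.append("no_https")
    return leads

def verify(leads: list[Lead]) -> tuple[list[Lead], list[Lead]]:
    """Verification: well-formed emails pass; everything else goes to a review queue."""
    clean, review = [], []
    for lead in leads:
        (clean if EMAIL_RE.match(lead.email) else review).append(lead)
    return clean, review

def export_csv(leads: list[Lead]) -> str:
    """Export: a CSV string that a CRM import could consume."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["name", "website", "email", "signals"])
    for lead in leads:
        writer.writerow([lead.name, lead.website, lead.email, ";".join(lead.signals)])
    return buf.getvalue()
```

In this sketch, a lead that fails verification is routed to a review queue instead of being dropped. This keeps a human in the loop for incomplete records. The `export_csv` output could just as well feed a dashboard as a CRM.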